| Saber Talk | February 26, 2009 |

The Rule 4 draft is, without question, one of the most important events of the year for Major League teams. One great draft can change the future of a franchise. The draft gives teams an opportunity to acquire young, talented players for a relatively small financial commitment. If one of them reaches the bigs, and becomes even an average player, you’ll garner yourself a ton of value over that player’s first six years.

Naturally, then, the draft, and studying the amateur players, is a major part of each organization’s yearly workload. Consider this response from Chris Long, Padres’ Senior Quantitative Analyst, in an interview with us last year:

What's so amazing about the baseball draft, and I'm sure the draft in other sports, is the sheer number of players to consider. Different ages, sizes, polish, playing environments, growth potentials, levels of competition faced, ability components, injury tendencies, and it goes on. Then there's the information you get from the scouts. Which scouts are better? Are they looking at the right players, in the right way, the right number of times? What's the best way to integrate all of the information you have? Overlaying all of this are considerations of finance, utility, need, risk and the poker game of the actual draft. Draft the right player and he could be worth $50 or even $100 million in value to your club (see Pujols). Draft the wrong players and you'll waste millions and negatively impact your club for years. It's an extremely difficult, messy, noisy, and thoroughly insane problem to work on. It's beautiful.

We all know about scouting. It's crucial to the game, especially in college and high school, and it isn't going anywhere. But a less explored area (at least on the 'net), and perhaps an equally important one, is the thorough analysis of college statistics. Many times, people will bring up what Chris brought up in the above passage, saying there are too many factors to consider, too much noise in the data. There are varying levels of competition, parks, player aging, limited sample sizes, switching from aluminum bats to wood, etc. It goes on and on.

They are, of course, right on the money. Looking at the raw stats of two college players is probably a hopeless endeavor. Let's look at a quick, made-up example:

Player A: .300/.480/.680

Player B: .280/.420/.600

They are somewhat close, but if that's all we know about each player, we’ll probably go with Player A every time. But, let's say Player B played against the third-toughest opponents in Division 1 and also played in a big pitcher's park. Player A played in a small conference, against relatively weak competition, and in a great hitter's park. Now who are you goin' with? Keep in mind that this is a simplified example that leaves out many significant factors. It serves as a reminder that the numbers, alone, are just numbers; they have relatively little utility in sorting out baseball players on the college level.

Anyway, as you can see, the reservations people have about college stats are real. However, there's no reason why we can't try to make some adjustments, and make some sense of the madness.

We've spent the last four months importing and adjusting collegiate baseball statistics in an attempt to neutralize the numbers to allow for cross-conference comparisons. To do this, we've discovered that Boyd's World is an invaluable tool. He gets much of the credit for accumulating a lot of the data and making it available online.

Now, our methods were actually pretty simple. We're judging the players in our system on a few things that we feel are a solid scope for the offensive skills necessary to succeed in professional baseball. They include:

- Weighted On-Base Average (wOBA)

- Isolated Power (IsoP; slugging percentage minus batting average)

- Strikeout percentage (K%; strikeouts divided by plate appearances)

- Walk percentage (BB%; walks divided by plate appearances)

- Speed score
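The two rate stats and IsoP in the list above are simple enough to sketch in code. This is only an illustration: the function names are ours, the plate-appearance and strikeout totals in the usage lines are made up, and wOBA and speed score are left out because their exact formulas are not given here.

```python
def isolated_power(slg: float, avg: float) -> float:
    """IsoP: slugging percentage minus batting average."""
    return slg - avg


def rate_per_pa(events: int, plate_appearances: int) -> float:
    """Share of plate appearances ending in an event (used for K% and BB%)."""
    if plate_appearances <= 0:
        raise ValueError("plate_appearances must be positive")
    return events / plate_appearances


# Made-up Player A from the earlier example: .300/.480/.680
iso_a = isolated_power(slg=0.680, avg=0.300)

# Hypothetical counting stats, for illustration only
k_pct = rate_per_pa(events=40, plate_appearances=250)
bb_pct = rate_per_pa(events=35, plate_appearances=250)

print(f"IsoP {iso_a:.3f}, K% {k_pct:.1%}, BB% {bb_pct:.1%}")
```

Using plate appearances rather than at-bats as the denominator follows the definitions in the list.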

All of the above are pretty self-explanatory, especially with the Wins Above Replacement explosion that happened in November and December of 2008 around the sabermetric blogosphere. However, the wOBA formula we used did not include stolen bases. Honestly, it wasn't for any particular reason; we just happened to grab the one copy of the formula that did not include them.

As for speed score, it's measuring "baseball speed," or, at least, that's the intended goal. It's actually a fairly generic speed score that is not much different from the one Bill James used in his earlier works.

But, what do we take into account when adjusting these numbers? For us, it was park factors and level of competition faced. Those two components can vary from team-to-team in such a dramatic fashion that you'd initially swear they aren't right. For instance, Air Force had a 4-year park factor from 2005-2008 of 145. Conversely, a school like Longwood University had a park factor over that time of just 72. With such drastic discrepancies, it was important to address this. Again, drawing from Boyd's World, we have multiple-year park factors. He lists two for each team, one being a PF and one being TPF – or Park Factor and Total Park Factor. The former is just rating that team's home park, while the latter is rating all of the parks that team played in over the course of time it was tracked. So, Air Force's 145 park factor is just their home park. Playing in the Mountain West, they frequent some of the most hitter friendly parks in collegiate baseball, and their Total Park Factor was 128 from 2005-08. Basically, over those years, Air Force's team played in environments that were 28 percent more offense-friendly than a neutral ballpark, which would have a rating of 100.

To neutralize for park factors, we take the wOBA for each hitter, and simply run it through this: wOBA*square root(100/Total Park Factor). This nets us a Park-Adjusted wOBA (PAwOBA).
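As a rough sketch of that park adjustment, here it is in Python. The function name and the .400 wOBA are hypothetical; the 128 is Air Force's 2005-08 Total Park Factor quoted above, and 100 is the neutral rating.

```python
import math

NEUTRAL_PARK_FACTOR = 100.0  # a neutral ballpark rates 100


def park_adjust(woba: float, total_park_factor: float) -> float:
    """Park-Adjusted wOBA: wOBA * sqrt(100 / Total Park Factor)."""
    if total_park_factor <= 0:
        raise ValueError("total_park_factor must be positive")
    return woba * math.sqrt(NEUTRAL_PARK_FACTOR / total_park_factor)


# Hypothetical .400 wOBA hitter whose team had a Total Park Factor of 128
pa_woba = park_adjust(0.400, 128)
print(f"PAwOBA: {pa_woba:.3f}")
```

The square root dampens the correction: a 28 percent more offense-friendly environment trims the wOBA by roughly 12 percent rather than the full amount.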

But that's just the first part of the components to neutralize. You also have to take into account the competition these numbers are being tested against. As mentioned previously, two stat lines, unadjusted, are not equal. Thankfully, Boyd's World comes through again with his Strength of Schedule ratings. To neutralize this, we do pretty much the same as above:

PAwOBA*square root(Strength of Schedule/100)
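Chaining the two steps might look something like the sketch below. It assumes, as the formula implies, that the Strength of Schedule rating is scaled so that 100 is average; the function names and the sample inputs (a .400 wOBA and a 110 schedule rating) are ours, for illustration only.

```python
import math


def park_adjust(woba: float, total_park_factor: float) -> float:
    """Step 1: wOBA * sqrt(100 / Total Park Factor)."""
    return woba * math.sqrt(100.0 / total_park_factor)


def competition_adjust(pa_woba: float, strength_of_schedule: float) -> float:
    """Step 2: PAwOBA * sqrt(Strength of Schedule / 100)."""
    if strength_of_schedule < 0:
        raise ValueError("strength_of_schedule must be non-negative")
    return pa_woba * math.sqrt(strength_of_schedule / 100.0)


def fully_adjust(woba: float, total_park_factor: float,
                 strength_of_schedule: float) -> float:
    """Apply the park adjustment, then the competition adjustment."""
    return competition_adjust(park_adjust(woba, total_park_factor),
                              strength_of_schedule)


# Hypothetical hitter: .400 wOBA, Total Park Factor 128, schedule rating 110
print(f"Adjusted wOBA: {fully_adjust(0.400, 128, 110):.3f}")
```

Since both steps are square-root multipliers, the combined adjustment reduces to wOBA * sqrt(Strength of Schedule / Total Park Factor), so a tough schedule can partly offset a hitter-friendly park.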

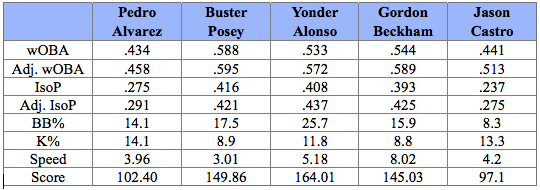

This gets us a wOBA for each player that is now both park and competition adjusted. We do this for IsoP as well, using the same methods and just substituting IsoP for wOBA. And before we jump straight to the table (even though this is going on long enough), we'd like to give a brief introduction to our "Score" category. We don't have a catchy name for it yet (although we're open to all suggestions), but it encompasses all of the categories that we're tracking. It weights the adjusted wOBA, adjusted IsoP, K% and BB%, and throws in our speed score as well. But, we've rambled enough. On to the 2008 stats for the first five college bats taken in the 2008 Rule 4 draft:

A note on speed score: It's scaled down so that it reads as follows: -5 is terrible, 0 is bad, 5 is average, 10 is good, 15 is great, and 20+ is a flat-out burner.

The above are nothing more than just the 2008 numbers for the first five college bats taken last June. They are not meant to be a predictor of talent moving from aluminum to wood bats. Instead, it's just, at the moment, adjusting to see who had the best statistical seasons when you account for who they were playing and where. When the 2009 draft comes around, we'll have a better tool to judge player performance than just the raw stats, and hopefully it will shed some light onto who the top prospects are.

Also, don't forget that we haven't considered positional values or defense. A player's position is very important at this level. Players that start on the left of the spectrum (1b, left field, right field) have to hit a ton to make it in the bigs. Most great prospects start on the right side of the spectrum as amateurs and gradually shift to the left as they age, provided that their bats can play at those less-demanding defensive positions.

Additional Resources

Earlier in the article, it was mentioned that this type of stuff has been somewhat unexplored on the Internet. While that may be the case, there's certainly been plenty of research into the area:

- Right here at Baseball Analysts, Kent Bonham did some very similar work back in 2006.

- Jeff Sackmann, partnering with Bonham, runs collegesplits.com. He also does great work at The Hardball Times, much of it focusing on the college game and its numbers.

- This post at Sons of Sam Horn details how to go about some of these adjustments.

- Lincoln Hamilton, at Project Prospect, has also done some similar analysis.

Comments

I just want to say thanks to Myron for asking me to help out and major thanks to anything we linked to in the article. I am a big fan of Rich and all that is posted here.

That said, I am open to and welcome any and all comments, suggestions, and criticisms about my methods. I am always looking to improve and know there are minds smarter than mine that may read this.

Posted by: Mike Rogers at February 26, 2009 1:04 AM

The Adjusted stuff really didn't make much difference IMO.

Posted by: Gen3blue at February 26, 2009 5:53 PM

Saying that "the numbers have relatively little utility in sorting out baseball players on the college level" is just plain false. And it's this kind of stone-age thinking that leads to great college players going undrafted every year in favor of strong fast guys who can't really play pro baseball that well.

First of all, a smart analyst already knows that there are differences by conference and can adjust for that in his mind. I have studied college stats considerably and I am well aware of the relative strength of each conference and division.

Secondly, the main stat that you should be looking for in a hitter is (BB & HBP) to SO ratio. The ability to draw walks and get hit by pitches is unaffected by park factors and type of bats. Furthermore, W/K ratio is the #1 indicator of the ability to adjust to a tougher level of pitching, not home runs, not SLG, not batting average. I guarantee that if a batter can control the strike zone and make contact he can hit almost any pitcher.

Posted by: Mark Rappaport at March 1, 2009 1:38 PM

Mark, I think you misinterpreted our comment. We meant that the *unadjusted* numbers mean very little at the college level. After making adjustments, they can become very useful, and that's what we did in the second half of the article. We made an adjustment for strength of opponents, just like what you're talking about.

I agree with you that bb/k ratio is important. But you can't leave power out of the equation, or contact ability, or numerous other things. Who are you going to take if two guys have equal bb/k ratios, but one has twice as much power?

I'm pretty sure that you could find a lot of good college hitters, who controlled the strike zone but did little else, who faded away as they tried to move up the ladder in pro ball.

Posted by: Myron Logan at March 1, 2009 1:57 PM

I agree with what you say, and I didn't mean to imply that BB/SO ratio was the only thing I look at. Having a good overall OBP is important and of course extra base hits are good. What I mean is more along the lines of this: if you look at a group of hitters with similar slugging percentages, the guys with the better BB/SO ratios will always be the better hitters. Furthermore, a hitter who can draw walks and post a .400 OBP or better is always a valuable hitter even if he never hits an extra base hit.

Posted by: Mark at March 3, 2009 12:29 PM

I know that this is a couple days late, but Mark, my system generally shows that if hitters have similar power numbers, the ones that walk more than the others are rising to the top. That'll show in the wOBA and in their BB% -- both of which I use in my 'score' which is based on adjusted wOBA and IsoP's as well as my speed score and BB/K%'s.

Posted by: Mike R at March 5, 2009 1:17 AM