| Command Post | November 16, 2007 |

Last time I checked in, I looked at the percentages of fastballs thrown to different types of hitters based on the count. Toward the end of that article, I threatened to try to predict via regression when a pitcher would throw his fastball, and this article is the preliminary result of that threat. What I wanted to do was model whether a pitcher threw a fastball or not, a binary variable, based on a particular list of factors, which was made up of both continuous and discrete variables. Regular linear regression can't handle binary dependent variables, but there is a special type of regression, logistic regression, that is designed for just this type of analysis. Given a dependent variable and one or more independent ones, a logistic regression will solve for the logarithm of the odds that a binary event is going to occur. In a linear regression, the relationship between the dependent variable and the independent variables can be read fairly directly from the generated coefficients. The coefficients from a logistic regression are more confusing, because they refer to the log of the odds of the event happening. The methods of a logistic regression are similar to those of a linear one, in that it models the relationship between several variables; it just does so in a less straightforward fashion.

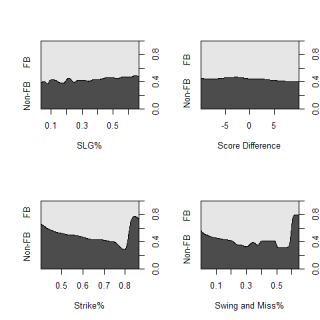
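For reference, the standard textbook form of a logistic regression can be written out as follows. The symbols here are generic notation (assumptions for illustration), not the fitted values from this article: $p$ is the probability that the pitch is a fastball, the $x_i$ are the predictors, and the $\beta_i$ are the coefficients.

$$
\ln\!\left(\frac{p}{1-p}\right) = \beta_0 + \beta_1 x_1 + \cdots + \beta_k x_k
\qquad\Longleftrightarrow\qquad
p = \frac{1}{1 + e^{-(\beta_0 + \beta_1 x_1 + \cdots + \beta_k x_k)}}
$$

The left-hand form is why each coefficient is a change in log-odds rather than a change in probability: a one-unit increase in $x_i$ adds $\beta_i$ to the log-odds, and the resulting change in $p$ depends on where $p$ started.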

While that's sinking in, I'm going to backtrack a little. Before getting into the messiness of regressions, I wanted to see if there were any easy correlations to spot. The conditional probability charts below give a good idea of the range of values FB% can take.

These charts graph the chance that a pitcher will throw a fastball on any pitch, based on one continuous variable. As slugging percentage increases, the likelihood of seeing a fastball decreases, and there is a very (very) slight increase in the probability of throwing a fastball at the extreme ends of score differential. The two graphs on the bottom use two indicators of the quality of a pitcher's fastball. The graph on the left uses the percentage of the time the fastball is thrown for a strike, while the one on the right uses the number of swings-and-misses generated as a percent of total swings taken at the fastball. Unfortunately, both graphs have several small-sample outliers on the right that skew them, but overall the trends are pretty strong and obvious. Good fastballs, both in terms of location and "nastiness," will be thrown frequently, and these plots give an indication of which factors may be related to the likelihood of throwing a fastball.
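A rough way to build a chart like this is to bin pitches by the continuous variable and take the share of fastballs in each bin. The sketch below assumes that approach; the function name, the bin width, and the sample pitches are all made up for illustration and are not the data or the method behind the charts.

```python
from collections import defaultdict

def fastball_rate_by_bin(pitches, bin_width=50):
    """Share of fastballs per SLG bin.

    pitches: iterable of (slg_points, is_fastball) pairs, with SLG stored
    as an integer in thousandths (450 means .450) to avoid float binning issues.
    """
    tallies = defaultdict(lambda: [0, 0])  # bin start -> [fastballs, total pitches]
    for slg_points, is_fastball in pitches:
        bin_start = (slg_points // bin_width) * bin_width
        tallies[bin_start][0] += int(is_fastball)
        tallies[bin_start][1] += 1
    return {b: fb / total for b, (fb, total) in sorted(tallies.items())}

# Hypothetical pitches, only to show the shape of the output. Not real data.
sample = [
    (380, True), (390, True), (395, False),
    (460, True), (470, False),
    (520, False), (530, True), (540, False),
]

rates = fastball_rate_by_bin(sample)
for bin_start, rate in rates.items():
    print(f"SLG bin starting at {bin_start}: {rate:.0%} fastballs")
```

With real data the sparse bins at the extremes would be the small-sample outliers, so it is worth printing the pitch count per bin alongside the rate.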

Getting back to the regression, the first variable I tested was the 2006 slugging percentage of the batter. Clearly there is a relationship between the number of fastballs a hitter sees and his quality (I've beaten this point into the ground), but how strong is it? The coefficient for SLG was -.77, so for every .010 increase in SLG, the likelihood of seeing a fastball decreases by about .19 percentage points when the starting probability is near 50 percent. This doesn't seem like that big of an impact, but SLG is still a significant predictor of FB%. According to my regression, the factors that relate to the quality of a pitcher's fastball, the strike% and swing-and-miss%, are also both significant predictors of whether a pitcher threw a fastball.
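To make the arithmetic behind that SLG figure concrete, here is a minimal sketch that converts the -.77 coefficient into a change in probability. Only the coefficient and the .010 step come from the article; the baseline probabilities and the function names are assumptions chosen for illustration.

```python
import math

def logit(p):
    """Probability -> log-odds."""
    return math.log(p / (1.0 - p))

def inv_logit(log_odds):
    """Log-odds -> probability."""
    return 1.0 / (1.0 + math.exp(-log_odds))

SLG_COEF = -0.77   # coefficient reported in the article
DELTA_SLG = 0.010  # a ten-point increase in slugging

def fb_prob_change(baseline_prob, coef=SLG_COEF, delta=DELTA_SLG):
    """Change in fastball probability when SLG rises by delta, other factors fixed."""
    new_log_odds = logit(baseline_prob) + coef * delta
    return inv_logit(new_log_odds) - baseline_prob

# Baselines are illustrative, not estimates from the regression.
for p in (0.5, 0.6, 0.7, 0.8):
    change = fb_prob_change(p)
    print(f"baseline {p:.0%}: {change * 100:+.3f} percentage points")
```

The shift is largest at a 50% baseline and shrinks as the baseline moves toward 0 or 100%, which is why a single "percent per .010 of SLG" number is only an approximation.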

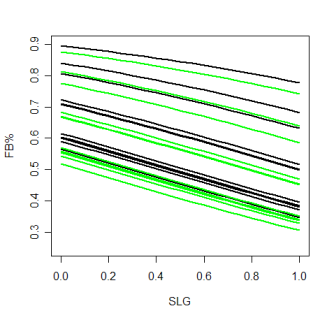

Categorical variables, such as the count or the situation with base-runners, are also important. This is, again, a very obvious point. But rather than just comparing hitter's counts to pitcher's counts and saying certain types of batters see more fastballs in each type of count, the regression lets me estimate what percentage of fastballs any type of hitter will see in any specific count. The chart below, which is a little confusing, attempts to do exactly that and also account for the quality of the fastball being thrown.

The green lines represent the estimated FB% in each count over a range of hitter abilities, for a fastball that gets a below-average number of swings-and-misses. Looking just at the green lines, there are three relatively distinct bands. The top three lines (roughly starting around .8) are 3&0, 3&1 and 2&0, which are the three biggest hitter's counts. There are actually four separate counts in the next two distinct green lines (starting around .7): 3&2, 1&0, 0&0 and 2&1. The bottom cluster of lines has the remaining counts: 1&1, 0&1, 0&2, 2&2 and 1&2. These groupings end up matching pretty well with the groupings of counts found here.

The black lines on the graph are estimates of the exact same thing (FB% in a given count over a range of SLG), but they are for pitches that have a higher than average swing-and-miss%. The ranges of different counts are the same so this just shows the range where most MLB pitchers would lie.

Before I wrap this up, I have a caveat to add. I only recently learned about logistic regression, so it's entirely possible that there is a problem with my methods. If anyone sees something I butchered with the regression, let me know and I'll fix it. I don't think this is the case or I wouldn't be publishing my results, but fair warning.

The differences I'm looking at right now are mostly marginal, especially at the ranges MLB players perform at. The three bands of counts are distinct in the FB% that pitchers throw, but within each band, it's very tough to see any differences. The next step with this type of analysis is to break down pitch selection based on potential swings in win expectancy. Win expectancy would account for score difference, base-runners, and outs, which are very important in determining how a pitcher pitches. The quality of the on-deck hitter is probably important as well.

On an individual pitcher level you could also potentially see more variation within a specific count. If Josh Beckett is throwing 70% fastballs in a 0&0 count while other Josh Beckett-types (pitchers with three pitches and a similar quality fastball) are throwing 60% fastballs in that count, that could be very valuable. Those numbers are for illustration, but a discrepancy like that would be important.

Comments

At some point, Joe, and it won't be easy, you want to look at some more "subjective" predictors of pitch selection. When I watch a game, I am notorious for predicting the next pitch. Seriously. I am uncanny. But I would have a really hard time programming a computer to do what I do even if it had all the requisite information. And it won't (have all the requisite information). As you know as a programmer, some types of intelligence are hard to program. Not necessarily impossible, but difficult. This is one of them.

Some things you might want to look at eventually. Does a pitcher truly randomize his pitches? It might look at first glance like he does, but you would have to run a chi-squared test or something like that, or look at the percentage of various pitches after various sequences (which the chi-squared test essentially does). Human beings have a notoriously difficult time randomizing their actions. I suspect that is the case for pitchers and catchers. For example, in poker, when you are supposed to, according to game theory, randomize your bluffs or your calls in the face of a potential bluff, according to some particular fixed odds, as determined by the size of the bet and the pot, you are advised to pick certain cards that are in your hand or on the table (or something like that) in order to do the randomization. If you don't do that, it is REALLY hard to randomize something. I mean, how do you say to yourself, "O.K., I am going to do this (say, throw a fastball) 40% of the time, on each and every opportunity (like a pitch)"?

So, for example, some pitchers, and they are not going to be as successful, are going to, say, throw 3 fastballs in a row, and say to themselves, "Well, it is time for a curveball," and throw one 80% or 90% (or 50%) of the time after those 3 fastballs, even though the game and count situations call for a fastball 80% of the time (which was probably why they threw one 3 times in a row). The more successful pitcher is going to throw that fastball 80% of the time whether he has not thrown one yet or he has thrown 10 in a row, as long as the situation calls for an 80% fastball. That is difficult to do. Human nature encourages us to eventually throw a curve after we have thrown x fastballs in a row. IOW, some pitchers will be better at randomizing their pitches than others (and hence more successful) and this is hard to ascertain without the proper research and tests. Just because a pitcher averages 60% fastballs at all times when he should be throwing 60% fastballs does NOT mean that he is randomizing that 60% properly. That is one of the keys to look at.
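One simple version of that randomization check can be sketched as a chi-squared test of independence between the previous pitch and the next one. This is only an illustration under stated assumptions: it looks back a single pitch rather than at runs of three, it ignores count and game situation (which a real analysis would have to hold fixed), and the function names and the sample sequence are hypothetical.

```python
import math

def transition_table(pitches):
    """Tally next-pitch type by previous-pitch type.

    pitches: list of booleans in the order thrown, True meaning fastball.
    Returns {prev_is_fb: [next_not_fb_count, next_fb_count]}.
    """
    table = {True: [0, 0], False: [0, 0]}
    for prev, nxt in zip(pitches, pitches[1:]):
        table[prev][int(nxt)] += 1
    return table

def chi_squared_2x2(table):
    """Pearson chi-squared statistic and p-value (1 degree of freedom).

    Assumes every row and column total is nonzero.
    """
    rows = [table[True], table[False]]
    row_totals = [sum(r) for r in rows]
    col_totals = [rows[0][j] + rows[1][j] for j in range(2)]
    n = sum(row_totals)
    stat = 0.0
    for i in range(2):
        for j in range(2):
            expected = row_totals[i] * col_totals[j] / n
            stat += (rows[i][j] - expected) ** 2 / expected
    # Survival function of a chi-squared variable with 1 degree of freedom.
    p_value = math.erfc(math.sqrt(stat / 2.0))
    return stat, p_value

# Hypothetical pitcher who strictly alternates fastball / off-speed:
# 50% fastballs overall, but perfectly predictable from the last pitch.
alternating = [True, False] * 20
stat, p_value = chi_squared_2x2(transition_table(alternating))
print(f"chi-squared = {stat:.2f}, p = {p_value:.2g}")
```

The alternating pitcher has an unremarkable overall fastball rate yet fails the test badly, which is the distinction between hitting the right percentage and actually randomizing.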

As far as being able to predict pitches when watching games, a lot of it has to do with how players "look" on previous pitches. For example, if a batter (especially certain batters) looks really stupid chasing an outside and low off-speed pitch, there is a good likelihood that he is going to get another one. It depends a little on the pitcher and catcher though. For example, Varitek is notorious (according to my observations and opinion) for NOT doing that. He does not like to be predictable even when a batter is not likely to take advantage of the pitcher being predictable.

Another similar example is when a batter is right on a particular pitch but fouls it off or hits one foul down the line. The pitcher is less likely to throw that same pitch.

And of course, even though each pitcher has certain overall percentages, those percentages will vary A LOT for each batter, depending on the scouting report on the batter. For example, when a pitcher gets ahead of Wily Mo Pena, any pitcher, he throws A LOT of off-speed pitches. Wily Mo cannot hit them and cannot seem to lay off them off the plate (and seems to be looking fastball on every pitch). Another example is that everyone throws Jason Varitek (at least towards the end of the season, when he supposedly is notoriously slow-batted) high and away fastballs and offspeed under the hands.

Anyway, a few things among many to think about when analyzing pitchers and pitches (and batters). This is an extremely complicated thing to do (and one that has and will ultimately bear many fruits) and I applaud your efforts so far!

Posted by: MGL at November 16, 2007 9:22 PM